This is Jessica. Null results are hard to take. This may seem especially true when you preregistered your analysis, since technically you’re on the hook to own up to your bad expectations or study design! How embarrassing. No wonder some authors can’t seem to give up hope that the original hypotheses were true, even as they admit that the analysis they preregistered didn’t produce the expected effects. Other authors take an alternative route, one that deviates more dramatically from the stated goals of preregistration: they bury aspects of that pesky original plan and instead proceed under the guise that they preregistered whatever post-hoc analyses allowed them to spin a good story. I’ve been seeing this a lot lately.

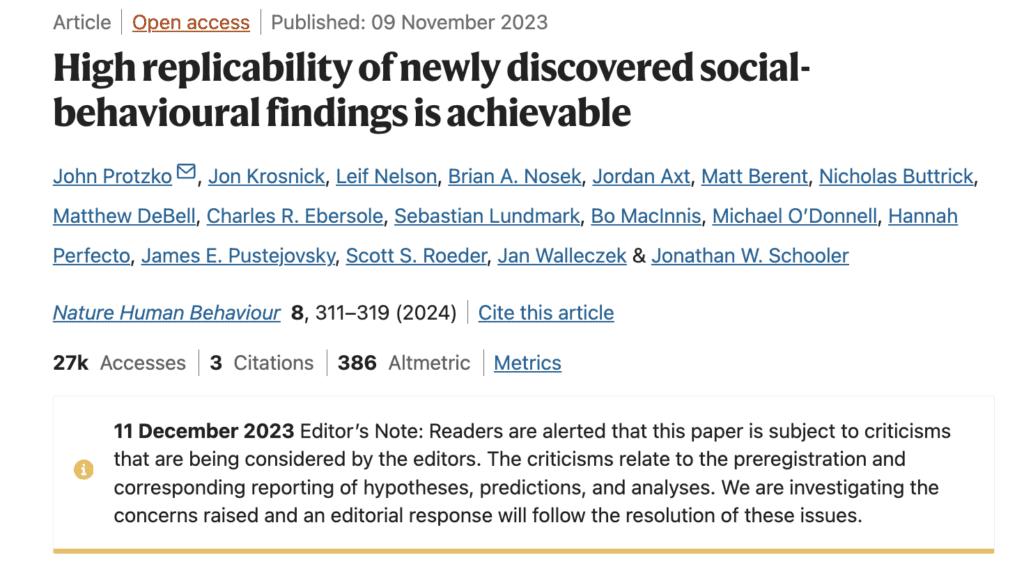

On that note, I want to follow up on the previous blog discussion of the 2023 Nature Human Behaviour article “High replicability of newly discovered social-behavioural findings is achievable” by Protzko, Krosnick, Nelson (of Data Colada), Nosek (of OSF), Axt, Berent, Buttrick, DeBell, Ebersole, Lundmark, MacInnis, O’Donnell, Perfecto, Pustejovsky, Roeder, Walleczek, and Schooler. It’s been about four months since Bak-Coleman and Devezer posted a critique that raised a number of questions about the validity of the claims the paper makes.

This was a study that asked four labs to identify (through pilot studies) four effects for possible replication. The same lab then did a larger (n=1500) preregistered confirmation study for each of their four effects, documenting their process and sharing it with three other labs, who attempted to replicate it. The originating lab also attempted a self-replication for each effect.

The paper presents analyses of the estimated effects and replicability across these studies as evidence that four rigor-enhancing practices used in the post-pilot studies (confirmatory tests, large sample sizes, preregistration, and methodological transparency) lead to high replicability of social psychology findings. The observed replicability is said to be higher than expected based on observed effect sizes and power estimates, and notably higher than prior estimates of replicability in the psych literature. All tests and analyses are described as preregistered, and, according to the abstract, the high replication rate they observe “justifies confidence of rigour-enhancing methods to increase the replicability of new discoveries.”

On the surface it looks to be an all-around win for open science. The paper has already been cited over fifty times. From a quick glance, many of these citing papers refer to it as if it provides evidence of a causal effect: that open practices lead to high replicability.

But one of the questions raised by Bak-Coleman and Devezer about the published version concerned its claim that all of the confirmatory analyses the authors present were preregistered. There was no such preregistration in sight if you checked the provided OSF link. I remarked back in November that even in the best case scenario where the missing preregistration was found, it was still depressing and ironic that a paper whose message is about the value of preregistration could make claims about its own preregistration that it couldn’t back up at publication time.

Around that time, Nosek said on social media that the authors were looking for the preregistration for the main results. Shortly after, Nature Human Behaviour added a warning label indicating an investigation of the work.

It’s been some months, and the published version hasn’t changed (beyond the added warning), nor do the authors appear to have made any subsequent attempts to respond to the critiques. Given the “open science works” message of the paper, the high-profile author list, and the positive attention it’s received, it’s worth discussing here in slightly more detail how some of these claims seem to have come about.

The original linked project repository has been updated with historical files since the Bak-Coleman and Devezer critique. By clicking through the various versions of the analysis plan, analysis scripts, and versions of the manuscript, we can basically watch the narrative about the work (and what was preregistered) change over time.

The first analysis plan is dated October 2018 by OSF, and outlines a set of analyses of a decline effect, where effects decrease after an initial study, that differ substantially from the story presented in the published paper. This document first describes a data collection process for each of the confirmation studies and replications that splits the collection of observations into two halves, with 750 observations collected first and the other 750 second. Each confirmation study and replication study is also assigned to either a) analyze the first half-sample and then the second half-sample or b) analyze the second half-sample and then the first half-sample.

There were three planned tests:

- Whether the effects statistically significantly increase or decrease depending on whether they belonged to the first or the second half-sample of 750;

- Whether the effect sizes of the originating lab’s self-replication study are statistically larger or smaller than those of the originating lab’s confirmation study;

- Whether effects statistically significantly decrease or increase across all four waves of data collection (all 16 studies with all 5 confirmations and replications).
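To make the first of these tests concrete, here is a minimal sketch of how one might compare the effect size in the half-sample analyzed first against the one analyzed second. It is an illustration only, not the authors’ analysis code: the function names, the use of Cohen’s d with its usual large-sample standard error, and the assumption of two equal arms per half-sample are all assumptions of this sketch.

```python
import math
from statistics import NormalDist


def cohens_d_se(d, n_treat, n_ctrl):
    """Large-sample approximation to the standard error of Cohen's d."""
    n_total = n_treat + n_ctrl
    return math.sqrt(n_total / (n_treat * n_ctrl) + d ** 2 / (2 * n_total))


def half_sample_difference_test(d_first, d_second, n_per_half=750):
    """Two-sided z-test for a difference between the effect size estimated
    from the half-sample analyzed first and the one analyzed second.

    Assumes (hypothetically) two equal arms within each half-sample and
    independent half-samples.
    """
    n_arm = n_per_half // 2
    se_first = cohens_d_se(d_first, n_arm, n_arm)
    se_second = cohens_d_se(d_second, n_arm, n_arm)
    diff = d_first - d_second
    z = diff / math.sqrt(se_first ** 2 + se_second ** 2)
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return diff, z, p_value


if __name__ == "__main__":
    # Made-up effect sizes, purely for illustration.
    diff, z, p_value = half_sample_difference_test(d_first=0.25, d_second=0.21)
    print(f"difference={diff:.3f}, z={z:.2f}, p={p_value:.3f}")
```

A decline effect of the kind the plan was looking for would show up here as a reliably positive difference (first-analyzed larger than second-analyzed) across studies.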

If you haven’t already guessed it, the goal of all this is to evaluate whether a supernatural-like effect resulted in a decreased effect size in whatever wave was analyzed second. It appears all this is motivated by hypotheses that some of the authors (okay, maybe just Schooler) felt were within the realm of possibility. There is no mention of evaluating replicability in the original analysis plan, nor in the preregistered analysis code uploaded last December in a dump of historical files by James Pustejovsky, who appears to have played the role of a consulting statistician. This is despite the blanket claim that all analyses in the main text were preregistered, and the further description of these analyses in the paper’s supplement as confirmatory.

The original intent did not go unnoticed by one of the reviewers (Tal Yarkoni) for Nature Human Behaviour, who remarks:

The only hint I can find as to what’s going on here comes from the following sentence in the supplementary methods: “If observer effects cause the decline effect, then whichever 750 was analyzed first should yield larger effect sizes than the 750 that was analyzed second”. This would seem to imply that the actual motivation for the blinding was to test for some apparently supernatural effect of human observation on the results of their analyses. On its face, this would seem to constitute a blatant violation of the laws of physics, so I’m honestly not sure what more to say about this.

It’s also clear that the results were analyzed in 2019. The first public presentation of results from the individual confirmation studies and replications can be traced to a talk Schooler gave at the Metascience 2019 conference in September, where he presents the results as evidence of an incline effect. The definition of replicability he uses is not the one used in the paper.

There are many clues in the available files that suggest the main message about rigor-enhancing practices emerged once the decline effect analysis above failed to show the hypothesized effect. For example, there’s a comment on an early version of the manuscript (March 2020) where the multi-level meta-analysis model used to analyze heterogeneity across replications is suggested by James. This suggestion comes after data collection has been completed and initial results analyzed, yet the analysis is presented as confirmatory in the paper, with p-values and discussion of significance. As further evidence that it wasn’t preplanned, in a historical file added more recently by James, it’s described as exploratory. It shows up later in the main analysis code with some additional deviations, no longer designated as exploratory. By the next version of the manuscript, it has been labeled a confirmatory analysis, as it is in the final published version.

This is pretty clear evidence that the paper is not accurately portraying itself.

Similarly, various definitions of replicability show up in earlier versions of the manuscript: the rate at which the replication is significant, the rate at which the replication effect size falls within the confirmatory study CI, and the rate at which replications produce significant results for significant confirmatory studies. Those that produce higher rates of replicability relative to statistical power are retained, and those with lower rates are either moved to the supplement, dismissed, or not explored further because they produced low values. For example, defining replicability using overlapping confidence intervals was moved to the supplement and not discussed in the main text, with the earliest version of the manuscript (Deciphering the Decline Effect P6_JEP.docx) justifying its dismissal because it “produced the ‘worst’ replicability rates” and “performs poorly when original studies and replications are pre-registered.” Statistical power is also recalculated across revisions to align with the new narrative.
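To see why the choice of definition matters, here is a small sketch that computes the three rates listed above on the same set of confirmation/replication pairs. Everything in it is hypothetical: the `Estimate` class, the `replication_rates` function, the normal-approximation 95% intervals, and the made-up numbers in `PAIRS` are assumptions for illustration, not the paper’s data or code.

```python
from dataclasses import dataclass
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # critical value for a two-sided 95% interval


@dataclass
class Estimate:
    effect: float  # estimated effect size
    se: float      # its standard error

    @property
    def ci(self):
        """Normal-approximation 95% confidence interval."""
        return (self.effect - Z95 * self.se, self.effect + Z95 * self.se)

    @property
    def significant(self):
        """True when the 95% interval excludes zero."""
        low, high = self.ci
        return low > 0 or high < 0


def replication_rates(pairs):
    """pairs: list of (confirmation, replication) Estimate tuples."""
    n = len(pairs)
    sig_conf = [(c, r) for c, r in pairs if c.significant]
    return {
        # 1. Share of replications that are significant.
        "replication_significant": sum(r.significant for _, r in pairs) / n,
        # 2. Share of replication estimates inside the confirmation study's CI.
        "within_confirmation_ci": sum(
            c.ci[0] <= r.effect <= c.ci[1] for c, r in pairs
        ) / n,
        # 3. Share of significant replications among significant confirmations.
        "significant_given_significant": (
            sum(r.significant for _, r in sig_conf) / len(sig_conf)
            if sig_conf else float("nan")
        ),
    }


# Made-up (confirmation, replication) pairs, purely for illustration.
PAIRS = [
    (Estimate(0.30, 0.05), Estimate(0.12, 0.05)),
    (Estimate(0.20, 0.05), Estimate(0.15, 0.05)),
    (Estimate(0.02, 0.05), Estimate(0.03, 0.05)),
    (Estimate(0.25, 0.05), Estimate(0.05, 0.05)),
    (Estimate(0.40, 0.05), Estimate(0.20, 0.05)),
]

if __name__ == "__main__":
    for name, rate in replication_rates(PAIRS).items():
        print(f"{name}: {rate:.2f}")
```

On these made-up numbers the three definitions give 0.60, 0.40, and 0.75 for the same studies, which is the room for maneuver that comes from picking the definition after seeing the results.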

In a revision letter submitted prior to publication (Decline Effect Appeal Letterfinal.docx), the authors tell the reviewers they’re burying the supernatural motivation for the study:

Reviewer 1’s fourth point raised a number of issues that were confusing in our description of the study and analyses, including the distinction between a confirmation study and a self-replication, the purpose and use of splitting samples of 1500 into two subsamples of 750, the blinding procedures, and the references to the decline effect. We revised the main text and SOM to address these concerns and improve clarity. The short answer to the purpose of many of these features was to design the study a priori to address exotic possibilities for the decline effect that are at the fringes of scientific discourse.

There’s more, like a file where they appeared to try a whole bunch of different models in 2020 after the earliest provided draft of the paper, got some varying results, and never disclosed it in the published version or supplement (at least I didn’t see any mention). But I’ll stop there for now.

C’mon baby I’m gonna tell the truth and nothing but the truth

It seems clear that the dishonesty here was in service of telling a compelling story about something. I’ve seen things like this transpire plenty of times: the goal of getting published leads to attempts to find a good story in whatever results you got. Combined with the appearance of rigor and a good reputation, a researcher can be rewarded for work that on closer inspection involves so much post-hoc interpretation that the preregistration seems mostly irrelevant. It’s not surprising that the story here ends up being one that we’d expect some of the authors to believe a priori.

Could it be that the authors were pressured by reviewers or editors to change their story? I see no evidence of that. In fact, the same reviewer who noted the disparity between the original analysis plan and the published results encouraged the authors to tell the real story:

I won’t go so far as to say that there can be no utility whatsoever in subjecting such a hypothesis to scientific test, but at the very least if this is indeed what the authors are doing, I think they should be clear about that in the main text, otherwise readers are likely to misunderstand what the blinding manipulation is supposed to accomplish, and are at risk of drawing incorrect conclusions.

It’s funny to me how little attention the warning label or the multiple points raised by Bak-Coleman and Devezer (which I’m simply concretizing here) have drawn, given the zeal with which some members of the open science crowd strike to expose questionable practices in other work. My guess is that this is because of the feel-good message of the paper and the reputation of the authors. The lack of attention seems selective, which is part of why I’m bringing up some details here. It bugs me (though it doesn’t surprise me) to think that whether questionable practices get called out depends on who exactly is in the author list.

On some level, the findings the paper presents (that if you use large studies and attempt to eliminate QRPs, you can get a high rate of statistical significance) are very unsurprising. So why care if the analyses weren’t exactly decided in advance? Can’t we just call it sloppy labeling and move on?

I care because if deception is occurring openly in papers published in a respected journal for behavioral research by authors who are perceived as champions of rigor, then we still have a very long way to go. Interpreting this paper as a win for open science, as if it cleanly estimated the causal effect of rigor-enhancing practices, is not, in my view, a win for open science. The authors’ lack of concern about labeling exploratory analysis as confirmatory, their attempt to spin the null findings from the intended study into a result about effects on replicability even though the definition they use is unconventional and appears to have been chosen because it led to a higher value, and the seemingly selective summary of prior replication rates from the literature should be acknowledged as the paper accumulates citations. At this point months have passed and there have not been any amendments to the paper, nor any admission by the authors that the published manuscript makes false claims about its preregistration status. Why not just own up to it?

It’s frustrating because my own methodological stance has been positively impacted by some of these authors. I value what the authors call rigor-enhancing practices. In our experimental work, my students and I routinely use preregistration, we do design calculations via simulations to choose sample sizes, and we attempt to be transparent about how we arrive at conclusions. I want to believe that these practices do work, and that the open science movement is dedicated to honesty and transparency. But if papers like the Nature Human Behaviour article are what people have in mind when they laud open science researchers for their attempts to rigorously evaluate their proposals, then we have problems.

There are many lessons to be drawn here. When someone says all the analyses are preregistered, don’t just take them at their word, regardless of their reputation. Another lesson that I think Andrew previously highlighted is that researchers sometimes form alliances with others who may have different views for the sake of impact, but this can lead to compromised standards. Big collaborative papers where you can’t be sure what your co-authors are up to should make all of us nervous. Dishonesty is not worth the citations.

Writing inspiration from J.I.D. and Mereba.
