JHVEPhoto/iStock Editorial via Getty Images

Intel (NASDAQ:INTC) gave its DCAI business update on Wednesday. The update was well received by investors and INTC stock moved up by about 9% in the last couple of days – although part of the run-up was macro-driven, buoyed by Micron Technology’s (MU) inventory commentary.

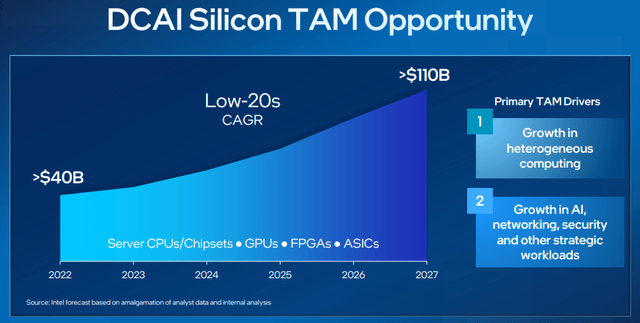

As has become common lately, Intel’s pitches start with a TAM narrative. This is because a growing TAM is key in a world where market share gains are unlikely. The TAM estimates in the image below look reasonable – especially considering the AI megatrend underway.

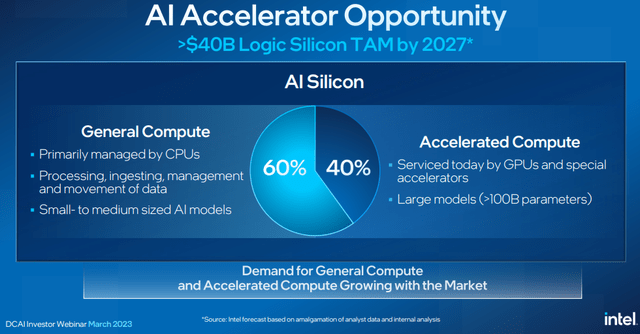

The more questionable part of Intel’s presentation was its view of the AI silicon market (image below). Intel expects 60% of the AI silicon opportunity to be in CPUs and 40% to be in accelerators. In terms of compute workloads, we know that the lion’s share of training is being done on GPUs and accelerators. This leaves inference as the main battlefield between CPUs and GPUs. Intel claims that Xeon dominates in inference for small to midsize models of up to 10 billion parameters. Another Intel argument is that CSPs may move to accelerators but enterprises will likely use CPUs for inference. Furthermore, Intel claims that most AI workloads, such as data processing and analytics, are general-purpose workloads that run best on CPUs for several technical and economic reasons, including the ubiquity of the x86 architecture.

These appear to be reasonable arguments, but the 60/40 CPU/accelerator split shown in the image is unlikely unless Intel’s claims hold and most of the inference is done on CPUs instead of GPUs or accelerators.

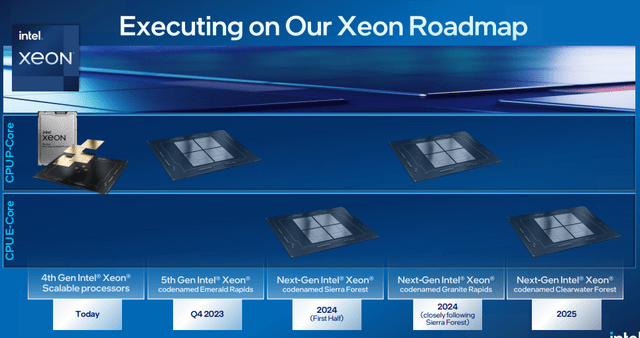

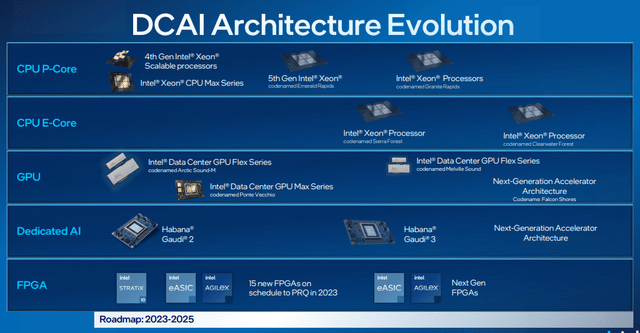

The main thrust of the business update was on the product front. Intel laid out its new CPU product roadmap through 2025 (image below). The key point here is that Intel is showing progress on, and commitment to, its two-pronged server CPU strategy using two different CPU cores. The performance cores, or P-Cores, are optimized for high single-thread performance in mainstream applications. These are under the “Rapids” family. The efficiency cores, or E-Cores, are optimized for high-throughput applications that benefit from high core density (instead of peak single-thread performance). These chips are under the “Forest” product family.

The market and many analysts likely found this roadmap attractive because, for the first time in many years, Intel appears to have a somewhat credible competitive roadmap.

Probably the best news for investors in the short term is the relatively strong adoption of Sapphire Rapids. Despite the product being very late to market and comparing unfavorably with the competition on most metrics, management commented that the Company is seeing broad adoption across enterprise, cloud service providers, comms service providers, OEMs, and ODMs. Management noted that the top 10 CSPs are deploying Sapphire Rapids and the Company now has over 200 designs shipping today from all major OEMs and ODMs. To be sure, this is stronger adoption than expected for this troubled product, and it is unclear how much of this is due to Sapphire Rapids being part of the Nvidia (NVDA) Hopper reference design. On the flip side, the volume seems to be on the lighter side, with management commenting that it is on track to ship 1 million Sapphire Rapids units by midyear.

Looking past Sapphire Rapids, as discussed below, this roadmap is far less impressive than it may appear to uncritical investors. Let us go through each product on the timeline.

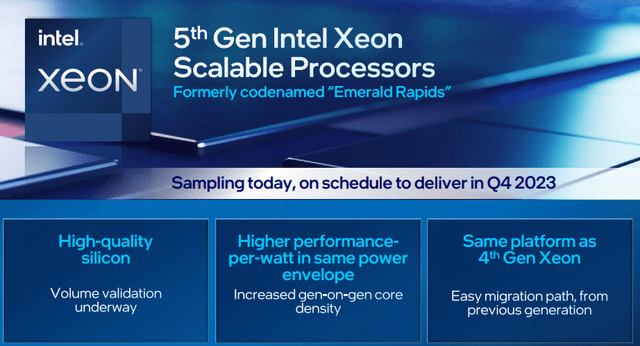

The first product, Intel’s next-generation Xeon and a follow-on to Sapphire Rapids called Emerald Rapids, is now sampling and will start shipping in Q4 (image below).

Emerald Rapids is an incremental update over Sapphire Rapids. While it is socket compatible, the main change is that what was previously a 4-die solution is now revised into a 2-die solution. Note that this integration goes in the opposite direction of the trend towards smaller dies and chiplets. The most likely reasons for the integration are that Intel has found the EMIB packaging on Sapphire Rapids expensive and that the Company does not yet have an equivalent of AMD’s Infinity Fabric ready to go the chiplet route.

This compromise solution is expected to have 66 cores across both dies – more cores than Sapphire Rapids but far fewer than the 96 cores of Advanced Micro Devices’ (AMD) Genoa. In other words, Emerald Rapids likely improves Intel’s competitive position slightly but falls well short of AMD’s equivalent.

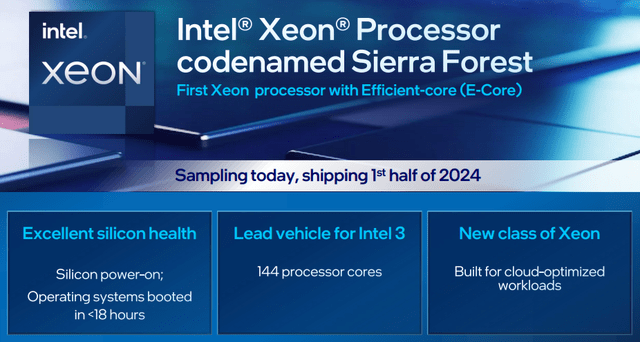

The next product on the roadmap, Sierra Forest, is a new E-Core server chip that competes with AMD’s Bergamo (image below).

Sierra Forest is expected to be the first product on the Intel 3 process. That makes it an extremely high-risk product, especially when one considers that Intel is only now shipping the Intel 7 process and has pointed to the high cost of Intel 7 as a reason for some of the recent margin crunch. Since the product is sampling, one could technically claim that the process works. But it is a long way from a prototype to production on a brand-new process.

Even assuming that the process is not a problem, readers should be aware that Sierra Forest has 144 cores but does not support simultaneous multithreading, or SMT. AMD’s Bergamo, on the other hand, has 128 cores but has SMT and, consequently, supports 256 threads. Bergamo is expected to be shipping in H2 2023 and has no process risk – given that TSMC (TSM) has been in production with its N5 process for about 3 years now.
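The thread-count arithmetic behind this comparison can be sketched in a few lines of Python. This is only an illustration built from the core counts quoted above; the `ServerChip` class and its field names are hypothetical, and the two threads per core for Bergamo is inferred from the 128-core, 256-thread figures rather than taken from a spec sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerChip:
    """Hypothetical container for the figures quoted in the article."""
    name: str
    cores: int
    threads_per_core: int  # 1 = no SMT; 2 = two-way SMT (assumed for Bergamo)

    @property
    def hardware_threads(self) -> int:
        # Total hardware threads = physical cores x threads per core
        return self.cores * self.threads_per_core


def thread_ratio(a: ServerChip, b: ServerChip) -> float:
    """How many hardware threads chip `a` exposes per thread of chip `b`."""
    return a.hardware_threads / b.hardware_threads


# Core counts as stated in the article
sierra_forest = ServerChip("Sierra Forest", cores=144, threads_per_core=1)
bergamo = ServerChip("Bergamo", cores=128, threads_per_core=2)

if __name__ == "__main__":
    for chip in (sierra_forest, bergamo):
        print(f"{chip.name}: {chip.cores} cores, {chip.hardware_threads} threads")
    print(f"Bergamo/Sierra Forest thread ratio: {thread_ratio(bergamo, sierra_forest):.2f}")
```

Thread count is not the same as delivered performance, since an SMT thread shares its core's resources with its sibling, so the ratio should be read as a rough indicator of how many software threads each socket can schedule at once rather than as a speedup figure.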

The E-Core that Intel uses in Sierra Forest is a reduced version of the P-Core and thus less performant than the Zen 4c cores used in Bergamo. This makes it likely that Bergamo will beat Sierra Forest to market, support more threads, and be more performant in several applications.

In other words, Sierra Forest, arriving in H1 2024, will not restore Intel’s competitiveness, although it may help Intel narrow the competitive product gap on the server side.

The next product on the roadmap, Granite Rapids, did not get much discussion but appears to be a mid-2024 or H2 2024 product. By this time, AMD would likely be shipping its next-generation Zen 5 product line. Achieving product leadership in that timeframe seems doubtful. Time will tell if Granite Rapids helps Intel narrow the gap with AMD.

This takes us to the final product on the roadmap – Clearwater Forest in 2025. This product is being targeted for the 18A process, which Intel expects will be its leadership process. If Intel can deliver on this promise, it is conceivable that, for the first time in many years, Intel could regain product leadership – this of course assumes that AMD fails to field a competitive product in that timeframe.

Not Much To Write Home About With Non-CPU Roadmap

The rest of the roadmap, in GPUs, FPGAs, and accelerators, does not show anything particularly impressive for 2023 or 2024 (image below).

Habana Gaudi seems to be a compelling product, but mostly in comparison to Nvidia’s A100 family. With Nvidia starting H100 shipments last quarter, it will take Gaudi 3 for Intel to have a chance at leadership. And Gaudi 3 is not expected to arrive until 2024. This, when coupled with the already known cancellation of some GPU programs, suggests that Intel will be relegated to the lower end of the accelerator market for the next couple of years and will compete mainly against Nvidia’s A100 and AMD’s MI250. Intel’s wins against the H100 or MI300 generation will be rare.

While Intel devoted a good bit of discussion during the update to how its OneAPI effort makes developing applications seamless across CPUs, GPUs, FPGAs, and accelerators, the challenge is that it will be difficult to win sockets against a strong incumbent like Nvidia without offering compelling GPU/accelerator performance. So, the software story is not likely to have much traction until Intel’s hardware is competitive – which does not appear likely until 2025 or later.

Management stated that Intel is the volume leader in the IPU market, with its FPGAs, Xeon D, and Ethernet components being deployed in 6 out of the top 8 hyperscalers. While this may be true, when it comes to the latest-generation DPU products, Nvidia’s BlueField and AMD’s Pensando solutions seem to be winning several sockets against Intel’s Mount Evans alternative.

Summary

The biggest news, and the source of the investor enthusiasm, is that after years of fielding non-competitive roadmaps, Intel has put forward a more competitive one. But there is a big difference between showing a roadmap and delivering products. Even assuming Intel can execute per the disclosed roadmaps, leadership products from Intel will be absent until 2025 or later.

What could save Intel in this high-risk venture is whatever silicon it is outsourcing to TSMC. If that silicon is similar to what Intel is developing on its internal nodes, the risk to these products reaching the market would be reduced, although they would still be much higher cost compared to AMD products based on small-die chiplets.

All things considered, the optimism that caused the stock run-up in the last couple of days is, at best, premature.